-

4 Steps to Optimize Your LinkedIn Profile for Sales Prospecting

When you’re prospecting for new leads on LinkedIn, it’s crucial to optimize your profile to engage […]

-

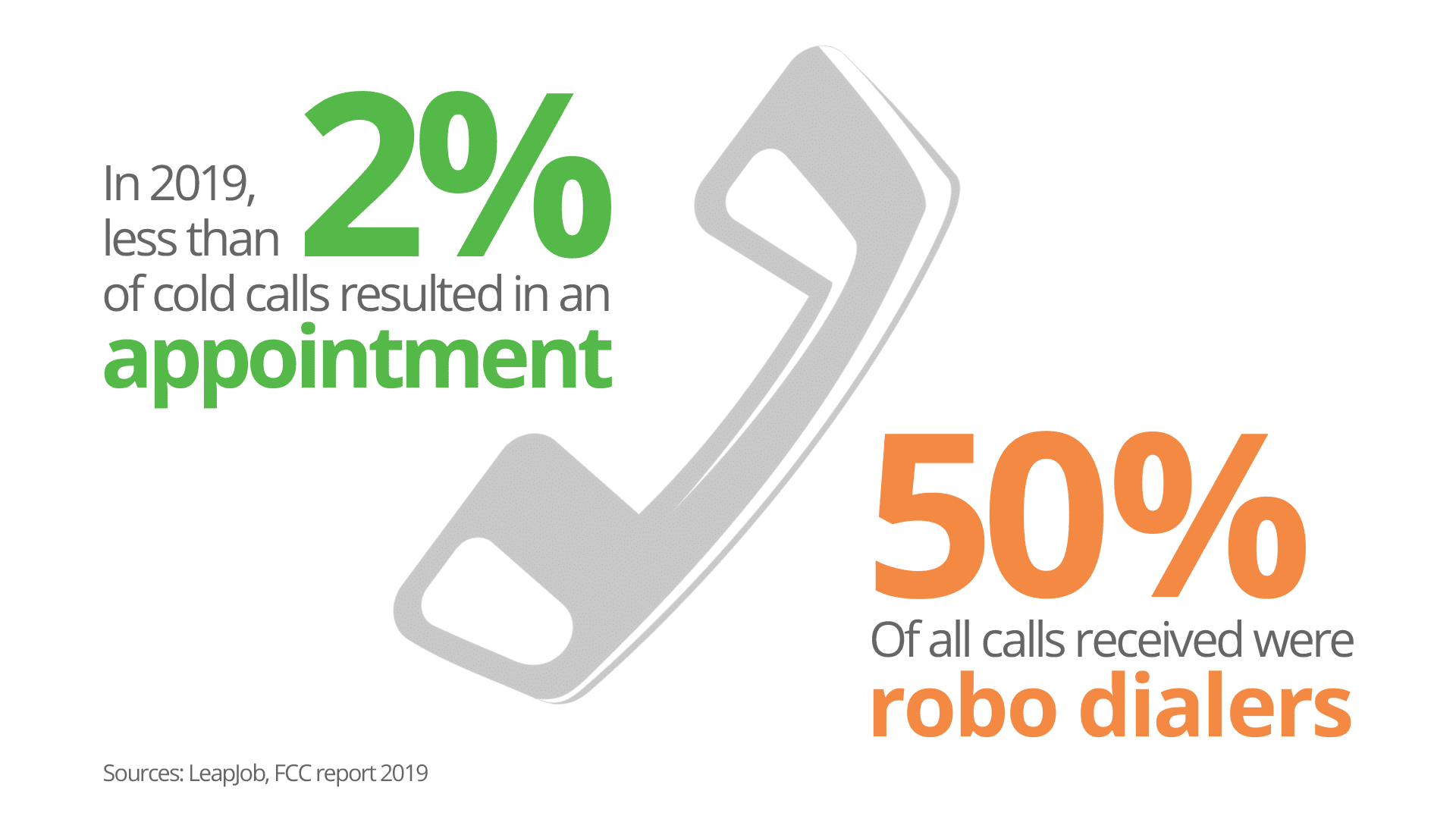

The Reality of Cold Calling for B2B Sales

When you’re thinking about your 2021 sales goals, you’re probably considering which methods are most […]

-

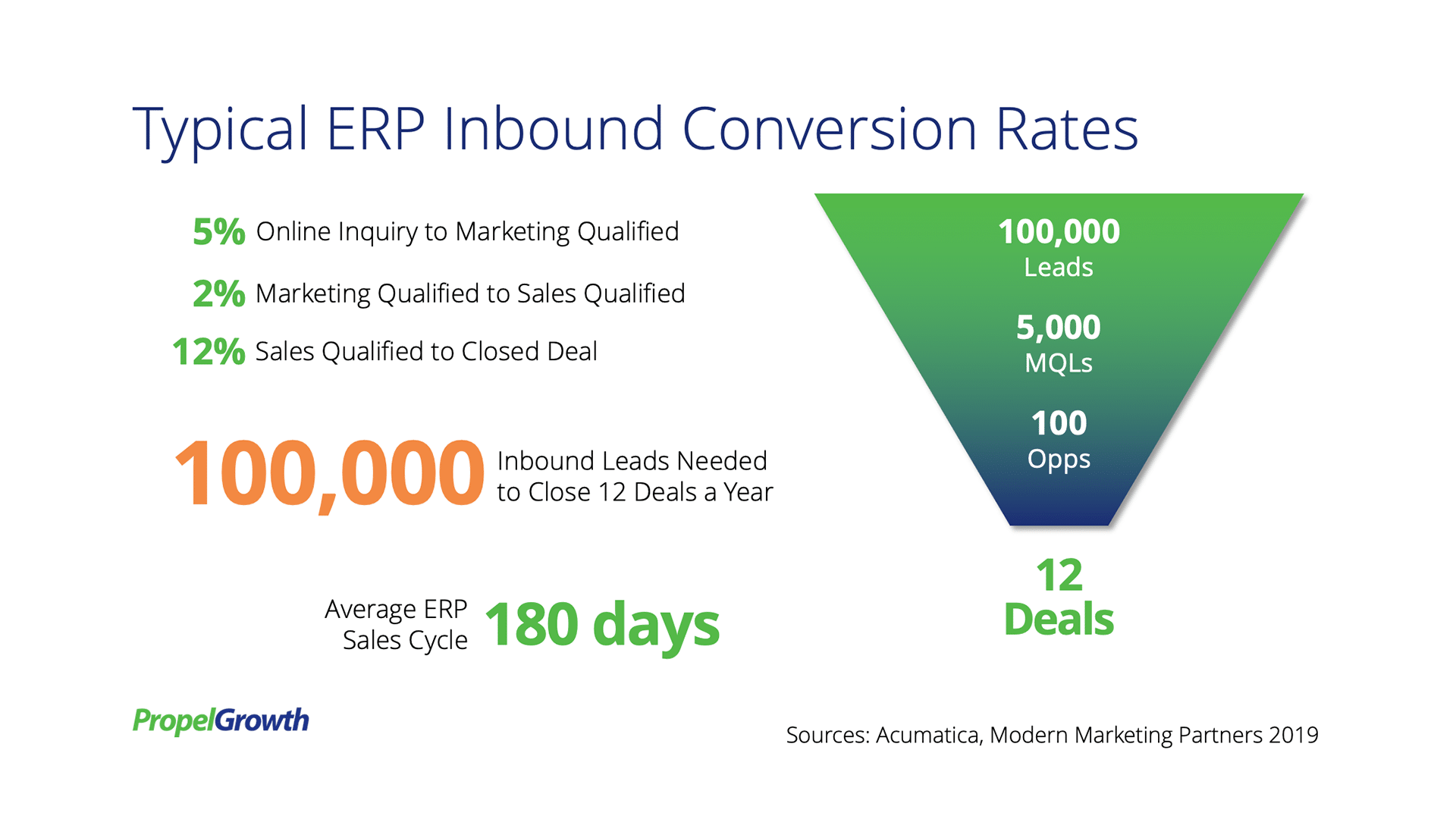

Can Inbound Marketing Generate Enough Leads?

It’s a new year, and hopefully, it will be a better one, with business picking […]

-

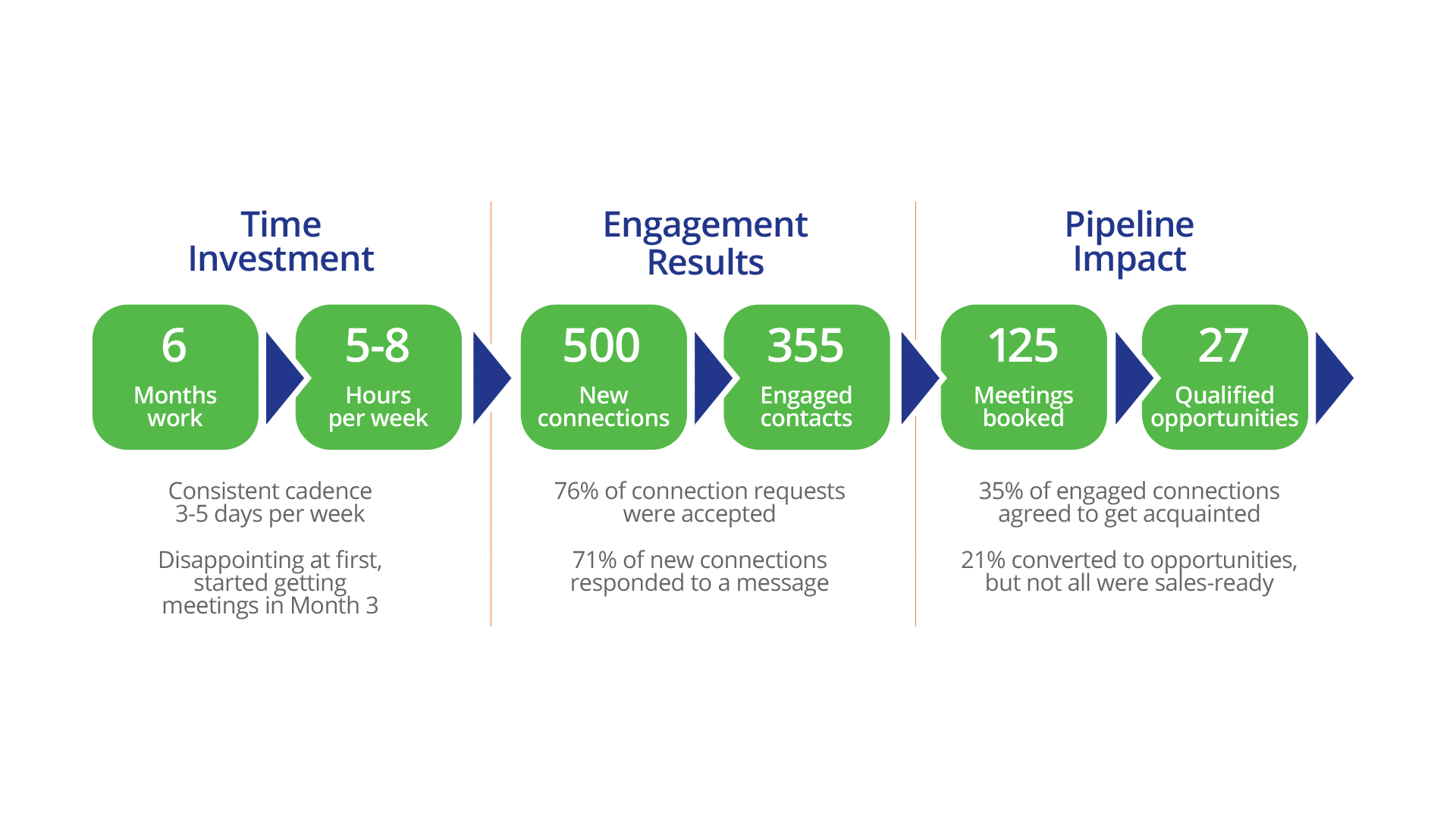

How to Fill Your Sales Pipeline Using LinkedIn for Prospecting

When I started PropelGrowth in 2007, I was able to leverage a network and reputation […]

-

How to Win 74% of Your ERP Deals

Studies show that 74% of B2B technology deals go to the seller who brings the […]

-

Buyer Persona Research, the Key to Niche Marketing

The Company That Understands the Customer Best Wins

Many companies believe that buyer persona (or […]

-

8 Ways a Niche Strategy Improves the Value of Your Practice

A niche strategy can have a dramatic impact on the growth of your Acumatica practice. […]

-

Acumatica VARs – Spending Money on Marketing with No Results?

Creating targeted content for a specific audience makes a huge difference in marketing results. Last […]